The AI Era Demands a New Identity Vendor

In most organizations, the identity verification stack that talent acquisition teams rely on was designed to solve a compliance problem, not a security one. Background checks answered whether someone had committed a crime and was permitted to work. Multi-factor authentication was an IT solution for securing employee access once hiring was complete, and fraud signal technology was used only to flag anomalies in product use, not in recruiting workflows. Each of these tools was coherent within its original scope, and each was designed for a threat model that AI-driven impersonation has rendered obsolete.

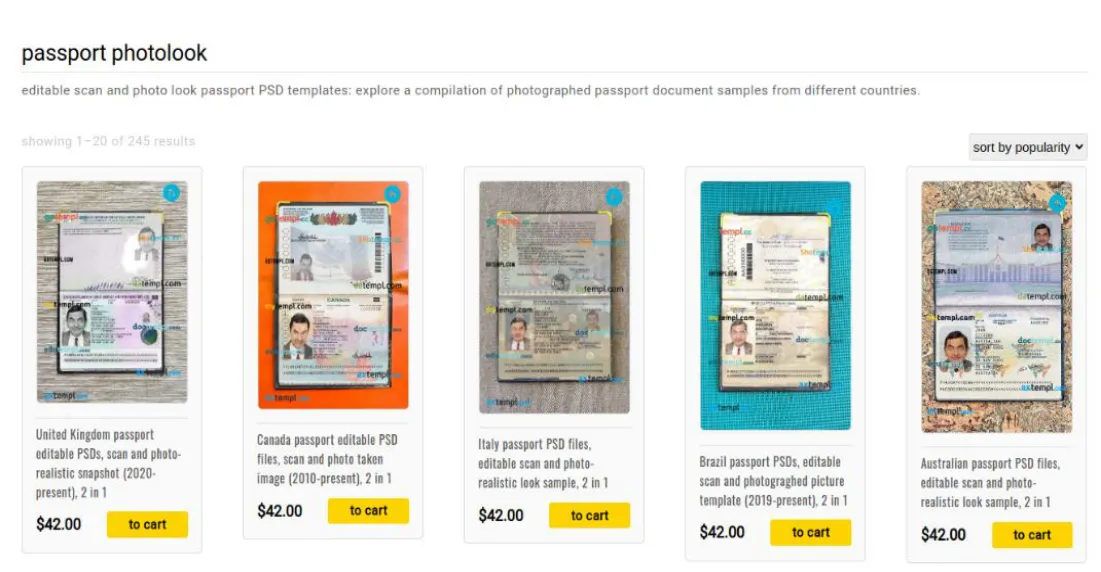

The change is not incremental. As we have discussed previously, the economics of document fraud have collapsed: a convincing faceswapped ID now costs $15 and requires no specialized skills. With phishing tools similarly democratized, individual attackers and state-sponsored networks alike deploy these techniques against remote hiring pipelines across every industry. The controls organizations inherited were not built for the AI era, and the vendors selling those controls cannot admit it.

Why Legacy Vendors Can't Make the Shift

The old world organized identity into two separate domains, each owned by a different vendor category, and neither category is positioned to close the gap that now exists between them. Legacy IDV vendors were built for strangers and designed around compliance: they verify identity once, for a moment, against a database. Identity Platform vendors were built for insiders and designed around provisioning: they govern access for known employees operating on managed devices. The problem that AI-era impersonation creates falls between those two domains, requiring something neither vendor was architected to provide, namely the ability to bind verified identity, confirmed physical location, and ongoing operator continuity to a single person, across a workflow that spans both strangers and employees.

Legacy IDV vendors built their value around database lookups: verifying that a document number exists, that a name matches, that a record is clean. That architecture has a critical flaw: DMV databases do not expose facial images through their APIs, which means a faceswapped ID using real underlying data passes every check. The document is legitimate; the face on it is not. IDV vendors cannot acknowledge this failure publicly without undermining the product they sell.
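The flaw described above can be illustrated with a toy model. The sketch below is not any vendor's actual implementation: the `IdDocument` type, the `REGISTRY` dictionary, and the `lookup_only_check` function are all hypothetical names invented for illustration, and the registry is assumed to hold text fields only, with no facial image to compare against.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IdDocument:
    number: str
    name: str
    date_of_birth: str
    photo_id: str  # stand-in for the face printed on the document


# Hypothetical registry: text fields only, no facial image is available.
REGISTRY = {
    "D1234567": {"name": "Jane Doe", "date_of_birth": "1990-04-12"},
}


def lookup_only_check(doc: IdDocument) -> bool:
    """Pass if the document's text fields match the registry record."""
    record = REGISTRY.get(doc.number)
    if record is None:
        return False
    return (
        record["name"] == doc.name
        and record["date_of_birth"] == doc.date_of_birth
    )


genuine = IdDocument("D1234567", "Jane Doe", "1990-04-12", photo_id="jane-face")
faceswapped = IdDocument("D1234567", "Jane Doe", "1990-04-12", photo_id="attacker-face")

print(lookup_only_check(genuine))      # True
print(lookup_only_check(faceswapped))  # True: the photo never enters the check
```

Because `photo_id` is never compared against anything, both documents pass. Under this assumption, no amount of tuning on the text-field match changes the outcome.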

Identity Platform vendors' domain begins after hire, where employees are known, devices are managed, and access is governed through invitation and provisioning workflows. Extending that model to unverified candidates, strangers presenting unknown hardware from unknown locations, would require abandoning the architectural assumptions their products are built on; the "invite and onboarding required" model is not a removable feature but the foundation of how these systems function.

Fraud detection vendors occupy a third category that is equally mismatched. These tools are calibrated to identify behavioral change, specifically the moment a trusted user does something anomalous. In hiring fraud, however, the deception is fully in place before day one and never changes, so there is no trusted baseline to deviate from. Behavioral anomaly detection therefore fails entirely against a threat actor whose primary financial incentive is to remain invisible.
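A minimal sketch of why baseline-relative detection misses this case, assuming a simple z-score detector over each account's own history. Real fraud products use far richer signals; the `is_anomalous` function, the threshold of 3.0, and the login-hour data are illustrative assumptions, not a description of any specific tool.

```python
from statistics import mean, pstdev


def is_anomalous(baseline: list[float], observation: float, threshold: float = 3.0) -> bool:
    """Flag an observation that deviates from the account's own history."""
    mu = mean(baseline)
    sigma = pstdev(baseline)
    if sigma == 0:
        return observation != mu
    return abs(observation - mu) / sigma > threshold


# Hypothetical daily login hour (UTC) for two accounts.
compromised_employee = [9.0, 9.5, 8.75, 9.25, 9.0]    # trusted history, then a takeover
impostor_since_day_one = [2.0, 2.5, 1.75, 2.25, 2.0]  # the odd pattern *is* the baseline

print(is_anomalous(compromised_employee, 2.0))    # True
print(is_anomalous(impostor_since_day_one, 2.0))  # False
```

The same 02:00 login is flagged for the account with a trusted history and passes for the account that has behaved this way since its first day, because the detector only measures change.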

What the New Era Actually Requires

Closing the gap requires a different architectural foundation, built around three capabilities that none of the existing categories were designed to provide.

The first is genuine location expertise. Self-reported addresses and IP-based signals are not assurance claims but form fields. Laptop farms can present devices in any jurisdiction, and DPRK operations use exactly this infrastructure to keep their workers untraceable. What transforms location into a meaningful control is fusion with proof of physical presence, confirming that the candidate was co-located with the device at the moment of verification, a capability that only became commercially viable with optical distance bounding on ordinary smartphones.

The second is hardware roots of trust. Browser-based verification, whether on mobile or desktop, cannot produce the assurance level that today's attacks require, because the browser environment itself is unverified. Verification built on hardware attestation confirms that the device has not been tampered with and that the operator is physically present, producing a claim that a browser session cannot replicate.

The third is real-time, continuous operation without re-onboarding. As we have covered previously, every system handoff in a hiring pipeline is a trust boundary where prior assertions are accepted by convention, not by validation. Current hiring practices treat identity as a one-time credentialing event, checked at onboarding and then assumed valid indefinitely, even though the employment relationship itself is where these schemes operate. The warning signs that a vendor is not built for this era are specific: verification that runs in a browser, checks measured in minutes or hours, location proofs derived from IP addresses, and a process that must be restarted from scratch for every new engagement. These are not minor limitations but design choices, appropriate for the compliance problem the tools were built to solve and structurally inadequate for the impersonation problem organizations now face.
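The four warning signs above can be expressed as a simple evaluation checklist. This is a sketch under stated assumptions: the `VendorProfile` fields, the `warning_signs` function, and the 60-second cutoff for "minutes or hours" are hypothetical choices made for illustration, not an established scoring standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VendorProfile:
    runs_in_browser: bool
    check_seconds: float           # typical time to complete one verification
    location_source: str           # e.g. "ip", "self_reported", "device_presence"
    reonboard_per_engagement: bool


def warning_signs(v: VendorProfile, max_seconds: float = 60.0) -> list[str]:
    """Return the warning signs from the text that apply to this vendor."""
    signs = []
    if v.runs_in_browser:
        signs.append("verification runs in a browser")
    if v.check_seconds >= max_seconds:  # assumed cutoff for "minutes or hours"
        signs.append("checks measured in minutes or hours")
    if v.location_source == "ip":
        signs.append("location proof derived from IP address")
    if v.reonboard_per_engagement:
        signs.append("process restarts for every new engagement")
    return signs


legacy = VendorProfile(True, 900.0, "ip", True)
for sign in warning_signs(legacy):
    print("-", sign)
```

A profile that triggers none of the four conditions returns an empty list, which says only that these particular signs are absent, not that the vendor is adequate.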

Polyguard was built around location expertise, hardware roots of trust, and real-time continuous verification, the three capabilities that legacy vendors cannot add without rebuilding from scratch.

Frequently Asked Questions

Why can't my legacy identity vendor deal with AI?

Legacy IDV products were engineered for a world where creating a convincing fake ID required expertise and physical equipment. AI has made high-quality document fraud cheap and instant, and the database matching architecture that underpins most identity vendors was never designed to catch it. Updating their detection logic does not fix a structural mismatch between the tool and the threat.

What makes AI so hard for legacy vendors to deal with?

AI-powered hiring fraud separates identity, location, and skills across different people, so no single check catches the full picture. Legacy vendors were each built to verify only one of those things in isolation, which is exactly the gap these attacks are designed to slip through. Solving it requires binding all three to one confirmed person, something no legacy vendor's architecture was built to do.

Should I wait for my existing vendor to catch up to AI?

The financial exposure from a single fraudulent hire at a mid-sized company can run into the tens of millions of dollars before litigation is factored in. Beyond cost, legacy vendors have a commercial incentive to minimize how visible their limitations are, which makes a meaningful architectural overhaul unlikely. Waiting is itself a risk decision, not a neutral one.

AI has created new hiring fraud problems. Will my current vendor be able to solve them?

Most current vendors can tell you whether a document is real. They cannot tell you whether the person presenting it is physically present, whether that same person shows up to do the work, or whether their location is what they claim. Those are the questions that matter now, and they require purpose-built tooling rather than incremental updates to compliance-era products.

AI has changed the hiring game. Do you have the right team to win it?

Winning requires verifying identity, location, and operator continuity together, not separately. Any vendor that checks one at a time, relies on IP addresses for location, or treats onboarding as the final word on identity is operating with a playbook that organized impersonation schemes were specifically designed to beat.

Deepfakes have rewritten the hiring script. Are you keeping up?

Liveness detection confirms that a real person is on camera. It does not confirm that the document they are holding is genuine or that their location is what they claim. When the document has been altered to match the attacker's real face, liveness detection passes cleanly, and the attack succeeds without a single deepfake video in the loop.
